“Synthegy addresses reaction mechanisms in a similar way: It breaks reactions into elementary electron movements and explores multiple possibilities. The LLM assesses each step, guiding the search toward chemically plausible mechanisms. Additional information, such as reaction conditions or expert hypotheses, can also be incorporated as text.

In synthesis planning, Synthegy successfully identified routes that match complex strategic requests. In a double-blind expert study, 36 chemists provided 368 valid evaluations, and their judgments aligned with the system’s assessments 71.2% of the time on average. The framework can detect unnecessary protecting steps, assess reaction feasibility, and prioritize efficient pathways.

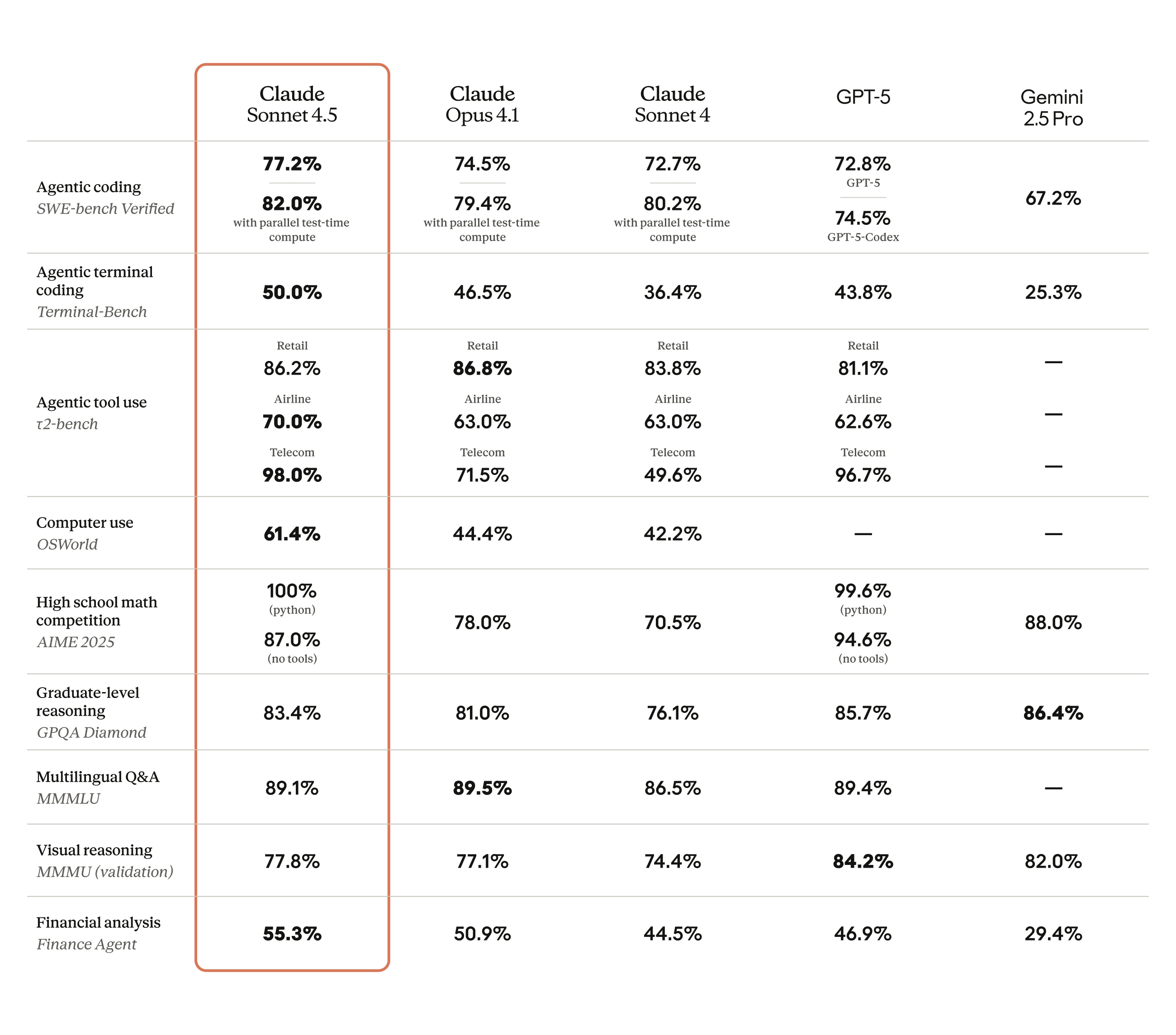

Synthegy shows that LLMs can analyze chemistry across multiple levels. They can interpret functional groups, assess individual reactions, and evaluate complete synthetic pathways. Larger and more advanced models demonstrate the strongest performance, while smaller models show limited capability.”

Source: AI helps chemists design molecules step by step – EPFL AI Center
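The excerpt does not say how Synthegy's mechanism search is implemented. The sketch below assumes a beam search: a scorer rates each candidate elementary electron movement with a plausibility between 0 and 1, and only the highest-scoring partial mechanisms are expanded further. A fixed lookup table stands in for the LLM. The intermediate labels (`reactants`, `int1`, `int2`, `product`), the move labels (`m1` to `m4`), and the functions `beam_search` and `score_step` are all hypothetical and used only for illustration.

```python
import math
from typing import Callable, Dict, List, Tuple

# Hypothetical mechanism graph: intermediate label -> [(move label, next intermediate)].
# A real system would generate candidate electron movements from the molecules themselves.
MoveGraph = Dict[str, List[Tuple[str, str]]]
# Hypothetical scorer (an LLM in Synthegy): (moves so far, candidate move) -> plausibility in (0, 1].
StepScorer = Callable[[List[str], str], float]


def beam_search(
    start: str,
    goal: str,
    graph: MoveGraph,
    score_step: StepScorer,
    beam_width: int = 2,
    max_depth: int = 5,
) -> List[Tuple[float, List[str]]]:
    """Return complete mechanisms as (log-plausibility, moves), best first."""
    # Each beam entry: (sum of log-plausibilities, current intermediate, moves so far).
    beam: List[Tuple[float, str, List[str]]] = [(0.0, start, [])]
    complete: List[Tuple[float, List[str]]] = []
    for _ in range(max_depth):
        candidates = []
        for total, state, moves in beam:
            for move, nxt in graph.get(state, []):
                # Summing logs is equivalent to multiplying per-step plausibilities.
                step_log = math.log(score_step(moves, move))
                candidates.append((total + step_log, nxt, moves + [move]))
        # Set aside mechanisms that reached the product.
        complete += [(t, m) for t, s, m in candidates if s == goal]
        # Keep only the best-scoring partial mechanisms for the next round.
        partial = [c for c in candidates if c[1] != goal]
        partial.sort(key=lambda c: c[0], reverse=True)
        beam = partial[:beam_width]
        if not beam:
            break
    return sorted(complete, key=lambda c: c[0], reverse=True)


# Toy stand-in for the LLM: fixed plausibilities that ignore the move history.
TOY_PLAUSIBILITY = {"m1": 0.9, "m2": 0.4, "m3": 0.8, "m4": 0.9}
toy_graph: MoveGraph = {
    "reactants": [("m1", "int1"), ("m2", "int2")],
    "int1": [("m3", "product")],
    "int2": [("m4", "product")],
}

results = beam_search(
    "reactants", "product", toy_graph, lambda history, move: TOY_PLAUSIBILITY[move]
)
for log_p, moves in results:
    print(moves, round(math.exp(log_p), 2))
```

This prints `['m1', 'm3'] 0.72` followed by `['m2', 'm4'] 0.36`. The route through `int1` wins because its overall plausibility (0.9 × 0.8) is higher, even though its second step scores lower than the alternative's. With `beam_width=1`, the partial mechanism starting with `m2` would be discarded after the first step. That keeps the search cheap but can miss a mechanism whose first step looks unlikely in isolation.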