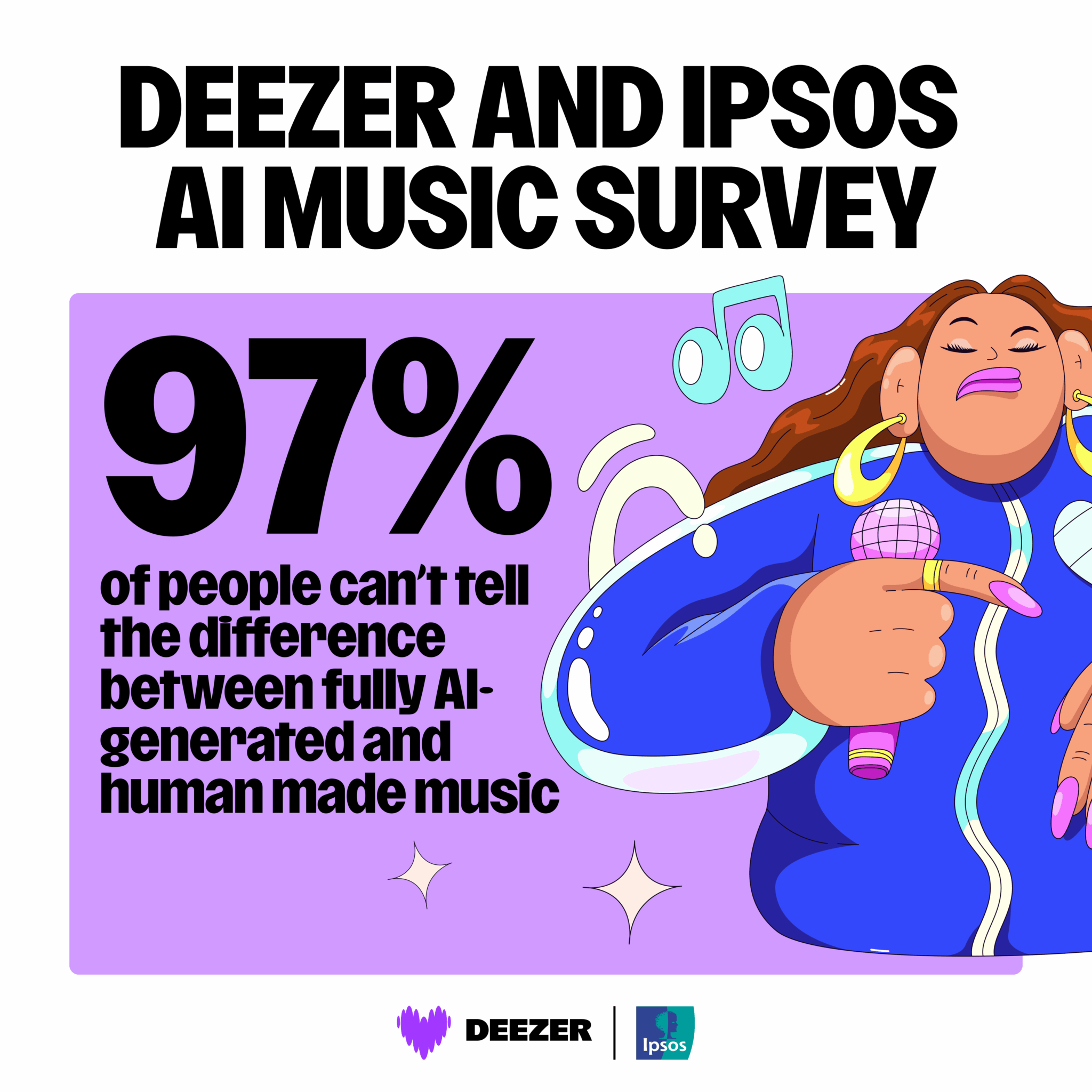

“Initially, all participants were asked to listen to three tracks and determine whether or not they were fully AI-generated – 97% of the respondents failed. A majority (71%) of the respondents were surprised by these results and more than half (52%) felt uncomfortable by not being able to tell the difference. All key results from the survey can be found below.

Deezer has taken an industry-unique approach towards AI music, championing the rights of artists, while ensuring transparency for music fans – the company is so far the only streaming platform to detect and clearly tag 100% AI-generated content for its users.”